Artificial intelligence is now part of everyday life. People use it for writing, searching, answering questions and creating images. Chatbots, AI search tools and content generators have become common in work and daily tasks.

But there’s a surprising problem behind these systems.

AI doesn’t always give correct answers. Sometimes it says things confidently that sound right but are actually wrong.

This problem is known as an AI hallucination.

It matters because AI is now used in areas like education, business, healthcare, marketing and software development. If people trust every response without checking it, the result can be misinformation, poor decisions or misleading content.

In this guide, we will explain AI hallucinations in simple terms, look at real examples and learn how to recognize them when they happen.

What Is an AI Hallucination? A Simple Explanation

An AI hallucination happens when an AI gives information that is not true but presents it as if it were correct. The answer may sound clear and confident, so the mistake can be hard to notice.

Simple definition

AI hallucination = When AI makes up information that sounds believable but is actually wrong.

Simple Example

Imagine you ask an AI chatbot, “Who invented the smartphone in 1998?” The AI might reply with something that sounds very specific, like saying the smartphone was invented by someone named John Smith in 1998 at Silicon Valley Tech Labs. At first glance the answer feels believable, but when you check the details, you may find that the person doesn’t exist, the company isn’t real, and the event never actually happened.

When an AI creates information like this that sounds convincing but isn’t true, it is called an AI hallucination.

Why Is It Called a Hallucination?

The term comes from psychology. In humans, a hallucination happens when someone sees or hears something that isn’t actually there. In a similar way, an AI hallucination happens when an AI system produces information that looks real but isn’t based on accurate facts.

This doesn’t mean the AI is imagining things like a human would. Tools such as ChatGPT simply predict the next word based on patterns in the data they were trained on. Because they rely on probability rather than true understanding, they can sometimes produce answers that sound correct but are actually wrong.

How AI Actually Generates Answers

To understand AI hallucinations, it helps to know how AI systems work. Most modern chatbots are built using Large Language Models (LLMs). These systems do not actually “know” facts the way humans do.

Instead, they learn patterns from huge amounts of text data and use those patterns to predict the next word in a sentence. Their responses are generated based on probability, not true understanding.

For example, if the input is “The capital of France is…”, the AI predicts the most likely next word and generates the answer “Paris.”

However, if the data the model learned from is incomplete, outdated, or confusing, the AI can predict the wrong information and produce an incorrect answer.
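The idea of next-word prediction can be sketched with a toy model. The example below is only an illustration, not how a real LLM is built: the tiny `corpus`, the helper names and the choice to look at just one previous word (a bigram model) are all assumptions made for the demo. One deliberately wrong sentence is included in the training text.

```python
from collections import Counter, defaultdict

# Toy training text (an assumption for this demo); real models learn from far more data.
corpus = (
    "the capital of france is paris . "
    "the capital of france is paris . "
    "the capital of italy is rome . "
    "the capital of france is lyon ."  # a wrong sentence hidden in the data
).split()

# Count which word follows each word (a simple bigram model).
following = defaultdict(Counter)
for current_word, next_word in zip(corpus, corpus[1:]):
    following[current_word][next_word] += 1


def next_word_probabilities(word):
    """Return {candidate: probability} for the word that follows `word`."""
    counts = following[word]
    total = sum(counts.values())
    if total == 0:
        return {}  # the model has never seen this word
    return {candidate: n / total for candidate, n in counts.items()}


def predict_next(word):
    """Pick the single most likely next word, or None if the word is unseen."""
    probabilities = next_word_probabilities(word)
    if not probabilities:
        return None
    return max(probabilities, key=probabilities.get)
```

Here `predict_next("is")` returns `"paris"` because that word followed “is” most often in the training text. The toy model gives the same answer even if the sentence was about Italy, and it still assigns some probability to the wrong word “lyon”. Real models use much more context, but the principle is similar: the output is the most likely continuation, not a verified fact.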

Real Examples of AI Hallucinations

AI hallucinations are not just theoretical. Many real incidents have been documented.

1. Fake Research Papers

AI chatbots have sometimes created academic references that do not actually exist. In some cases, the AI listed real authors but invented the paper titles and DOIs. When people tried to find these papers in academic databases, they could not find them. This can be risky because researchers might unknowingly cite sources that are completely fake.

2. Glue on Pizza Advice

In one widely discussed incident in 2024, Google’s AI Overviews search feature suggested adding glue to pizza sauce so the cheese would stick better. The suggestion traced back to a joke posted on an online forum, but the AI presented it as real advice. This shows how AI can pick up jokes or sarcasm from the internet and repeat them as facts.

3. Fake Legal Case Citations

In 2023, a lawyer used ChatGPT to help prepare a legal document. The AI generated several court case references that sounded real, but those cases never actually existed. The lawyer submitted the document to court, and the judge later discovered that the citations were fake. This incident showed the risk of trusting AI-generated information without checking it first.

How Often Do AI Hallucinations Happen?

Many people assume AI mistakes are rare. In reality, studies suggest hallucinations are fairly common, although the measured rate varies widely between models and tasks.

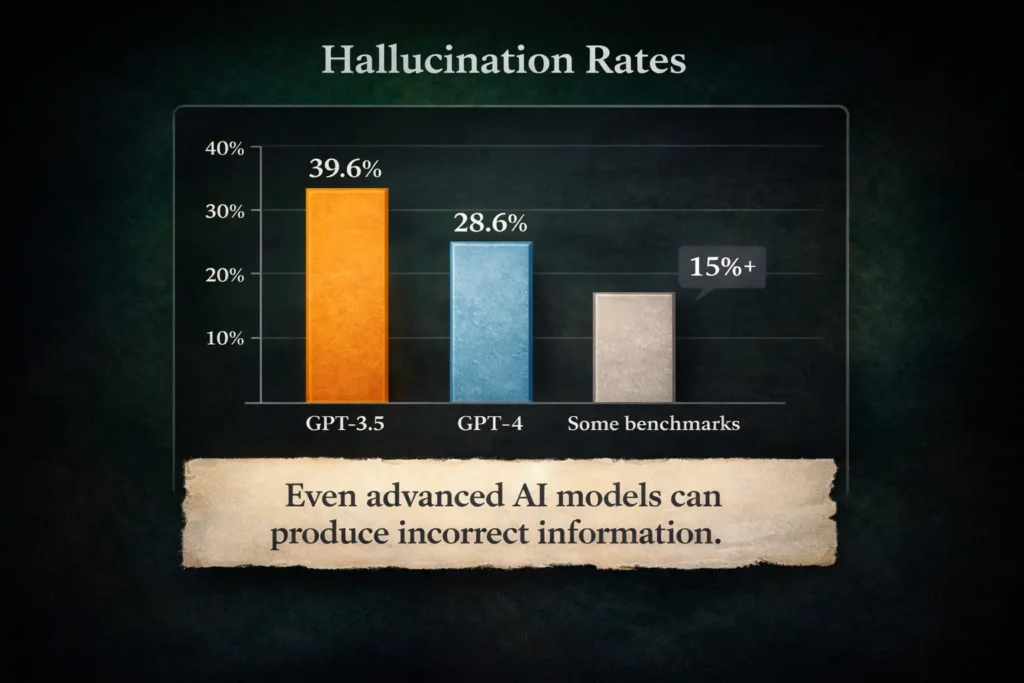

Some research found that hallucinations happened in about 39.6% of responses in GPT-3.5 and about 28.6% in GPT-4 for certain tasks. Other benchmarks show that even modern AI models can have hallucination rates above 15%.

Another study that looked at real user interactions found hallucinations appearing in around 31% of AI responses.

The exact numbers change depending on the AI model, how complex the question is, and whether the system uses outside data to verify information. But the takeaway is simple. Hallucinations are still a major challenge for today’s AI systems.

Why Do AI Models Hallucinate?

There isn’t just one reason. Several technical factors contribute.

1. AI Predicts Words, Not Facts

Language models are built to predict the most likely next word in a sentence. They are not designed to check whether the information is actually true. If something sounds like a reasonable answer, the AI may generate it even when it is wrong.

2. Incomplete or Biased Training Data

AI systems learn from huge amounts of data collected from the internet. But the internet contains misinformation, outdated content and conflicting information. Because of this, the model can sometimes produce inaccurate answers.

3. Overconfident Responses

Many AI tools are designed to respond to almost every question instead of saying “I don’t know.” This means the system may guess an answer when it is unsure, which can lead to incorrect information.
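One way to picture the alternative is a confidence threshold: answer only when the top candidate is clearly ahead, otherwise decline. The sketch below is hypothetical. The function name, the `threshold` value and the idea that a system exposes a clean probability for each candidate answer are assumptions for illustration; real tools rarely provide such tidy numbers.

```python
def answer_or_abstain(candidates, threshold=0.7):
    """Return the top candidate only if its probability clears the threshold.

    `candidates` maps each possible answer to an assumed probability.
    """
    if not candidates:
        return "I don't know"
    best = max(candidates, key=candidates.get)
    if candidates[best] >= threshold:
        return best
    # No candidate is convincing enough, so decline instead of guessing.
    return "I don't know"
```

With `{"Paris": 0.95, "Lyon": 0.05}` the function returns `"Paris"`. With three candidates at 0.40, 0.35 and 0.25, it returns `"I don't know"` rather than picking the weak front-runner.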

4. Unclear Questions

If a question is vague, the AI may misunderstand what the user actually means. For example, if someone asks “Explain Apple growth,” the AI might not know whether the question is about the company Apple Inc. or about how apple fruits grow. When the question is unclear, the AI can easily produce the wrong kind of answer.

Types of AI Hallucinations

Researchers usually categorize hallucinations into two main types.

1. Intrinsic Hallucinations

This happens when the AI gives information that directly contradicts the original source. For example, if a source article says the population of a city is 2 million but the AI summary says it is 5 million, the AI has contradicted a fact stated in the source.

2. Extrinsic Hallucinations

This happens when the AI adds new information that was never mentioned in the source. For example, the AI might say the city was founded in 1320 even though the original article never included that detail.
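The difference between the two types can be shown with a small comparison of a source and a summary. This is a simplified sketch: it assumes the facts have already been extracted into key-value pairs, which is the hard part in practice, and the function and field names are made up for the example.

```python
def classify_claims(source_facts, summary_claims):
    """Label each summary claim by comparing it with the source facts."""
    labels = {}
    for field, value in summary_claims.items():
        if field not in source_facts:
            # The source never mentioned this detail.
            labels[field] = "extrinsic"
        elif source_facts[field] != value:
            # The source mentions it, but with a different value.
            labels[field] = "intrinsic"
        else:
            labels[field] = "supported"
    return labels


source_facts = {"population": 2_000_000}
summary_claims = {"population": 5_000_000, "founded": 1320}
print(classify_claims(source_facts, summary_claims))
# → {'population': 'intrinsic', 'founded': 'extrinsic'}
```

The population claim is intrinsic because it contradicts the source, while the founding year is extrinsic because the source never mentions it.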

Why AI Hallucinations Are Dangerous

Hallucinations can cause serious problems when AI is used in important decisions.

Healthcare

If AI gives incorrect medical information, it could lead to dangerous treatment choices or wrong health advice.

Legal Industry

When AI creates fake legal references or case citations, it can cause serious problems in court and may even lead to penalties.

Education

Students who rely on AI for assignments may include incorrect facts without realizing the information is wrong.

Business and Marketing

If companies depend on AI-generated reports or data without checking them, they may make poor business or marketing decisions.

How to Reduce AI Hallucinations

Researchers and developers are actively trying to reduce AI hallucinations. Several approaches are being used to make AI responses more reliable.

1. Retrieval-Augmented Generation (RAG)

In this method, the AI first retrieves information from trusted sources and then generates the answer based on that data. This helps improve accuracy because the response is grounded in real information.
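The retrieve-then-generate flow can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `TRUSTED_DOCS` list, the word-overlap ranking and the prompt wording are all invented for the example, and the final call to a language model is left out. Production systems typically use a search index or vector database instead of simple word matching.

```python
import re

# Hypothetical trusted documents; a real system would query a search index or database.
TRUSTED_DOCS = [
    "Paris is the capital of France.",
    "Rome is the capital of Italy.",
    "The Intergovernmental Panel on Climate Change publishes assessment reports.",
]


def tokenize(text):
    """Split text into a set of lowercase words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, docs, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        docs,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, docs):
    """Put the retrieved text in front of the question before asking the model."""
    context = "\n".join(retrieve(question, docs))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

For the question “What is the capital of Italy?”, `retrieve` picks the sentence about Rome, and `build_prompt` places it ahead of the question. The model is then asked to answer from that text rather than from memory alone.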

2. Fact-Checking Systems

Some AI tools check their generated responses against external sources to see whether the claims are supported by evidence.

3. Better Prompt Design

Clear and specific questions can reduce hallucinations. For example, instead of asking “Explain climate change data,” a clearer request would be “Summarize climate change statistics from reports published by the Intergovernmental Panel on Climate Change.”

4. Human Verification

For important decisions or sensitive information, human review is still necessary. AI should be used as a helpful assistant, not treated as the final authority.

The Future of AI Hallucinations

AI models are improving quickly. Newer systems tend to make fewer hallucination errors, but the problem has not disappeared yet.

Researchers are working on several ways to reduce these mistakes. This includes improving the quality of training data, developing methods that help AI recognize when it is uncertain, and building systems that can verify their own answers.

The long-term goal is to create AI systems that are not only powerful but also reliable and trustworthy.

Key Takeaways

AI hallucination is one of the biggest challenges in modern artificial intelligence. Here are the key points to remember:

- AI hallucinations happen when an AI system generates information that is false but presents it as if it were a fact.

- This happens because language models focus on predicting the next word in a sentence rather than verifying whether the information is true.

- Research suggests hallucination rates can range from about 15% to 40%, depending on the task and the model used.

- These errors can affect important areas such as healthcare, legal work, education, and business decisions.

- Methods like retrieval systems, fact checking, and human review can help reduce the problem.

Final Thoughts

Artificial intelligence is a powerful tool, but it isn’t perfect. Systems like ChatGPT, Claude, and Gemini can produce helpful answers, creative ideas, and quick summaries. At the same time, they can also generate information that sounds confident but turns out to be wrong.

Understanding AI hallucinations helps people use these tools more carefully. It reminds users to double-check important information and avoid sharing details that may not be accurate.

As AI continues to develop, the focus is not only on making models smarter but also on making them more reliable and trustworthy.

FAQs

What is the 30% rule for AI?

The “30% rule” is an informal idea suggesting that AI systems can produce incorrect or fabricated information in a noticeable portion of responses. It reminds users that AI outputs should always be verified rather than accepted blindly.

Why does ChatGPT hallucinate?

ChatGPT can hallucinate because it predicts responses based on patterns in data rather than true understanding. When information is unclear or missing, the model may generate an answer that sounds convincing but is not accurate.

How long do visual hallucinations last?

Visual hallucinations can last from a few seconds to several minutes. The duration depends on the cause, such as sleep deprivation, migraines, medications or neurological conditions.

What causes hypnopompic hallucinations?

Hypnopompic hallucinations happen when a person is waking up from sleep. They occur because the brain is transitioning from dreaming to being awake, causing dream-like images or sounds to briefly appear in real life.
